The Smart Grid Just Got a Whole Lot Smarter

The U.S. energy grid loses roughly 5% of the electricity it generates to inefficient transmission and distribution systems.

In less developed countries, like Haiti and Iraq, over 50% of the energy generated is lost en route to consumers. To compensate for these losses, more electricity must be generated to meet demand. On a global scale, this additional energy is largely generated by fossil fuels, and the resulting “compensatory emissions” amount to nearly a billion metric tons of carbon dioxide equivalent annually.
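To make the compensation arithmetic concrete, here is a minimal sketch. The function name `required_generation` is hypothetical, and the model assumes losses are a flat fraction of what is generated, which is a simplification.

```python
def required_generation(demand_mwh: float, loss_fraction: float) -> float:
    """Energy that must be generated so that `demand_mwh` reaches consumers.

    Assumes a flat loss fraction: delivered = generated * (1 - loss_fraction).
    """
    if not 0.0 <= loss_fraction < 1.0:
        raise ValueError("loss_fraction must be in [0, 1)")
    return demand_mwh / (1.0 - loss_fraction)


# Delivering 100 MWh with the loss levels quoted in the text.
for loss in (0.05, 0.50):
    generated = required_generation(100.0, loss)
    print(f"loss {loss:.0%}: generate {generated:.1f} MWh to deliver 100 MWh")
```

Under this simple model, a 5% loss means generating about 105 MWh for every 100 MWh delivered, while a 50% loss means generating 200 MWh.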

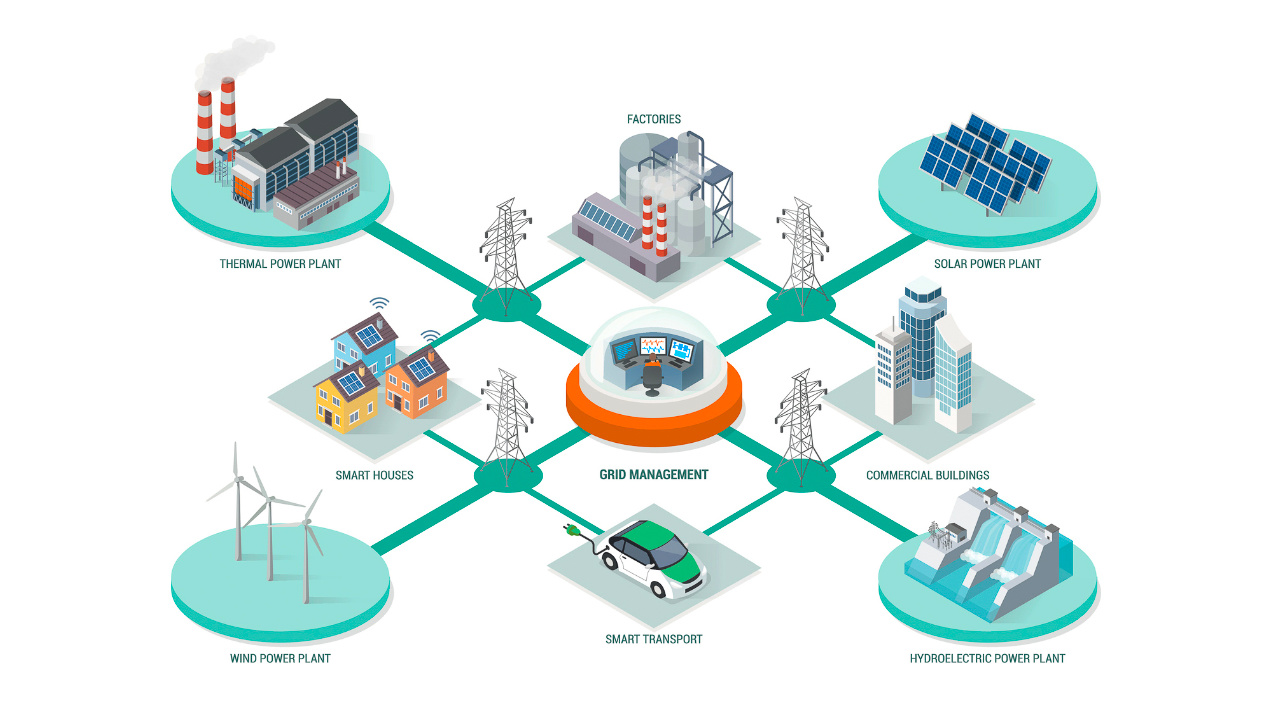

Now, as countries have a heightened focus on reducing their carbon footprint to slow climate change, decarbonizing the energy sector has become a priority. This starts with improving the grid infrastructure to minimize wasted energy and to accommodate renewable energy sources such as wind, solar, and hydropower.

The U.S. Department of Energy (DOE) recognizes these challenges. It has dedicated hundreds of millions of dollars to support the Grid Modernization Initiative to improve grid operations, ensure reliability and security, and integrate renewables.

The reality is that the U.S. energy grid is dated and faces a future it was not designed for. There is a great need for innovative solutions that improve the efficiency and flexibility of our power grid to support current and future demands while simultaneously reducing emissions.

Current State

The U.S. energy grid relies on nearly 10,000 generation plants, 600,000 miles of transmission lines, and roughly 5.5 million miles of local distribution lines to deliver electricity to consumers. Most of this transmission and distribution infrastructure is old and lacks real-time data analysis, modern condition monitoring, and automated communication systems.

The transmission grid has historically operated in a static and passive manner. According to WATT-Transmissions, “Transmission operators traditionally use fixed ratings, based on planning calculations; fixed settings, without power flow controls; and fixed topology/configuration, using normal (planning) open/close breaker status. This fixed grid topology was appropriate to deliver power from large central power plants to load, but greater flexibility is required as the generation mix shifts to variable and renewable sources.”

This mode of operation has resulted in unavoidable grid congestion, which leads to wasted energy that costs U.S. consumers over $6 billion in additional energy expenses annually. Although building new transmission facilities and expanding existing ones would help reduce congestion, doing so is costly and time-consuming, and the necessary permits are difficult to obtain.

Adopting innovative new technologies, such as the MEMCPU™ Platform, can help optimize delivery over the existing network today, representing a solution capable of dramatically reducing congestion while complementing grid expansion and integration of renewables.

Optimal Power Flow

Optimizing the flow of power to consumers where and when it’s needed is a critical challenge for energy companies today. Commonly referred to as the Optimal Power Flow (OPF) problem, it is the challenge of determining the best operating levels for electric power plants so that demand is met at every point in a transmission network while operating cost is minimized.
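As a rough illustration of what OPF asks, the sketch below dispatches generators on a hypothetical two-bus network. Everything here is an assumption made for illustration: the `Generator` class, the `dispatch_two_bus` function, the numbers, and the loss-free model in which all load sits at bus "B" and a single line with a fixed limit connects bus "A" to it. Real OPF uses the full nonlinear power flow equations over thousands of buses.

```python
from dataclasses import dataclass


@dataclass
class Generator:
    name: str
    bus: str             # "A" (remote) or "B" (next to the load)
    capacity_mw: float
    cost_per_mwh: float


def dispatch_two_bus(generators, load_mw, line_limit_mw):
    """Cheapest dispatch for a loss-free two-bus system with load at bus B.

    Generators are used in order of increasing cost. Output from bus A
    generators is additionally capped by the A-to-B line limit.
    """
    remaining_load = load_mw
    remaining_line = line_limit_mw
    dispatch = {}
    for gen in sorted(generators, key=lambda g: g.cost_per_mwh):
        output = min(gen.capacity_mw, remaining_load)
        if gen.bus == "A":
            output = min(output, remaining_line)
            remaining_line -= output
        dispatch[gen.name] = output
        remaining_load -= output
    if remaining_load > 1e-9:
        raise ValueError("demand cannot be met with the given limits")
    total_cost = sum(dispatch[g.name] * g.cost_per_mwh for g in generators)
    return dispatch, total_cost


fleet = [
    Generator("cheap_remote", "A", capacity_mw=150.0, cost_per_mwh=20.0),
    Generator("costly_local", "B", capacity_mw=100.0, cost_per_mwh=50.0),
]

# Same 120 MW load, first with ample line capacity, then with a congested line.
_, cost_free = dispatch_two_bus(fleet, load_mw=120.0, line_limit_mw=200.0)
plan, cost_congested = dispatch_two_bus(fleet, load_mw=120.0, line_limit_mw=80.0)
print(plan, cost_free, cost_congested)
```

With these made-up numbers, the congested line forces 40 MW onto the more expensive local unit, raising the hourly cost from 2,400 to 3,600. That difference is the kind of congestion cost that better use of existing transmission capacity aims to reduce.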

Ultimately, the goal for energy companies is to deliver energy to consumers in the most efficient, reliable, and affordable way possible. However, the complexity of this problem is growing as more distributed energy resources and renewables penetrate the market. Although “smart” meters and advanced distribution devices are being adopted, they lack the computing power required to support real-time, optimal power flow operations. Therefore, they rely on approximate solutions to distribute energy and waste energy in the process. Specifically, current methods are not suited to large and difficult OPF problems. These are highly nonlinear, multimodal optimization problems, so current methods tend to become trapped in local minima.
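The local-minimum trap is easy to reproduce on a toy problem. The sketch below runs plain gradient descent on a hypothetical one-dimensional cost curve with two valleys. The function `cost`, its coefficients, and the step size are illustrative assumptions; this is neither a real OPF objective nor a description of how any particular solver works.

```python
def cost(x: float) -> float:
    """Toy multimodal cost curve with a shallow valley and a deeper one."""
    return x**4 - 3 * x**2 + x


def cost_gradient(x: float) -> float:
    """Derivative of `cost` with respect to x."""
    return 4 * x**3 - 6 * x + 1


def gradient_descent(start: float, step: float = 0.01, iters: int = 2000) -> float:
    """Follow the downhill slope from `start` and return where it settles."""
    x = start
    for _ in range(iters):
        x -= step * cost_gradient(x)
    return x


# The same local method, started from two different points.
from_right = gradient_descent(2.0)    # settles in the shallow valley
from_left = gradient_descent(-2.0)    # settles in the deeper valley
print(f"start  2.0 -> x = {from_right:.3f}, cost = {cost(from_right):.3f}")
print(f"start -2.0 -> x = {from_left:.3f}, cost = {cost(from_left):.3f}")
```

Started from the right, the search stops in the shallower valley and never finds the cheaper operating point on the left. A real grid has thousands of variables rather than one, so there are far more such valleys to get stuck in.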

But that’s not the only challenge…

Integration of Renewables

According to the National Renewable Energy Laboratory (NREL), renewables now make up more than 20% of the energy produced in the United States annually. As they continue to make headway into the market, integrating them across the grid has become difficult to manage and control.

Renewable energy technologies like wind turbines and solar panels take up large amounts of real estate and are often located far from the cities they would serve. The distance, paired with the fact that they require the right weather to produce electricity, makes renewable technologies more difficult to control than fossil fuel-based technologies; that is, you can’t tell the wind turbine to turn when there’s no wind!

This presents various technical challenges that hinder our ability to connect renewables to the grid safely while ensuring stability and reliability. Surges in demand can strain the capacity of current transmission stations, creating more congestion and thus more waste. Additionally, intermittent generation from renewables undermines the reliability and consistency of supply.

Solution

The MEMCPU Platform represents a novel optimization technology that bypasses classical limitations when solving highly complex optimization problems such as OPF. The MEMCPU Platform enables ultra-efficient computation, rendering optimal solutions in near real-time at the scale required by the energy sector.

The MEMCPU Platform can identify and utilize hidden transmission capacity, reduce power flow on overburdened lines, and reconfigure existing grid elements to optimize various operational scenarios, including those to support renewables.

With MemComputing, energy providers can now identify and react effectively to changes in supply and demand to ensure our energy systems operate in the most efficient, reliable, and secure manner possible.

In addition to optimizing power flow, the MEMCPU Platform can improve grid operations through applications such as:

- Grid design

- Energy storage

- Unit commitment

- Energy trading

MemComputing’s team is ready to advance your competitive edge with a tailored optimization solution. Explore a free account today or contact us directly at [email protected] to get started.

For more information on MemComputing and our technology, please visit www.memcpu.com.